Everyone complains that AI is unreliable. Sometimes it is fantastic, other times it is useless. But most people are looking in the wrong place.

The problem is rarely the model. It is a combination of wrong expectations, missing context, and tasks that simply do not fit the technology. AI is not a universal solution — it is a specialized tool with very clear strengths and equally clear limitations.

This article is an honest guide. No buzzwords, no overhyped promises. Just a pragmatic overview of what AI can actually do — and what you should stay away from.

Where AI is surprisingly strong

Let us start with the positive. Within specific domains, AI delivers results that would have required an entire team just a few years ago:

- Text processing and summarization: AI is exceptionally good at reading large amounts of text, extracting key points, and restructuring content. For meeting notes, report summaries, and email drafts, AI saves hours, not minutes.

- Data analysis and pattern recognition: Give AI a CSV file with sales data and it can surface patterns you did not know existed. An Excel analysis that takes an analyst a day, AI can do in seconds, and often better.

- Coding and technical problem-solving: AI coding assistants like Claude Code and GitHub Copilot significantly accelerate software development. They are particularly strong at explaining existing code, finding bugs, and generating boilerplate.

- Classification and sorting: AI can categorize customer inquiries, emails, and documents with high precision when given clear categories and examples to work from.

- Language understanding and translation: AI understands nuances in language better than most expect. It can adapt tone, formality, and audience, and translate with quality that often exceeds traditional tools.
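To make the classification point concrete, here is a minimal Python sketch of what "clear categories and examples" can look like as a few-shot prompt. The function names, the prompt wording, and the sample categories are our own illustrative assumptions, not a specific vendor's API, and the actual call to a model is deliberately left out.

```python
from typing import Optional, Sequence, Tuple


def build_classification_prompt(
    categories: Sequence[str],
    examples: Sequence[Tuple[str, str]],
    text: str,
) -> str:
    """Assemble a few-shot classification prompt as plain text."""
    # Every example label must be one of the declared categories.
    unknown = {label for _, label in examples} - set(categories)
    if unknown:
        raise ValueError(f"examples use undefined categories: {sorted(unknown)}")

    lines = ["Classify the message into exactly one of these categories:"]
    lines += [f"- {name}" for name in categories]
    lines.append("")
    for sample, label in examples:
        lines += [f"Message: {sample}", f"Category: {label}", ""]
    # The prompt ends with an open "Category:" for the model to complete.
    lines += [f"Message: {text}", "Category:"]
    return "\n".join(lines)


def parse_category(model_output: str, categories: Sequence[str]) -> Optional[str]:
    """Return the matching category, or None if the reply is not a known one."""
    answer = model_output.strip().lower()
    for name in categories:
        if answer == name.lower():
            return name
    return None


# Hypothetical categories and examples, for illustration only.
categories = ["billing", "shipping", "other"]
examples = [
    ("I was charged twice this month.", "billing"),
    ("My parcel has not arrived yet.", "shipping"),
]
prompt = build_classification_prompt(
    categories, examples, "Can I change my delivery address?"
)
print(prompt)
```

Checking the reply against the known categories with `parse_category` is a small design choice with a large effect: a reply outside the list is treated as "no answer" and can be sent to a person instead of being silently accepted.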

Where AI fails — and why

Here are the areas where AI consistently disappoints. Not because the technology is bad, but because the tasks hit fundamental limitations in how language models work:

- • Factually correct answers without sources: AI models do not 'know' anything — they generate probable answers based on patterns. Without access to verified sources, they hallucinate convincingly. This is not a bug, it is an architectural property.

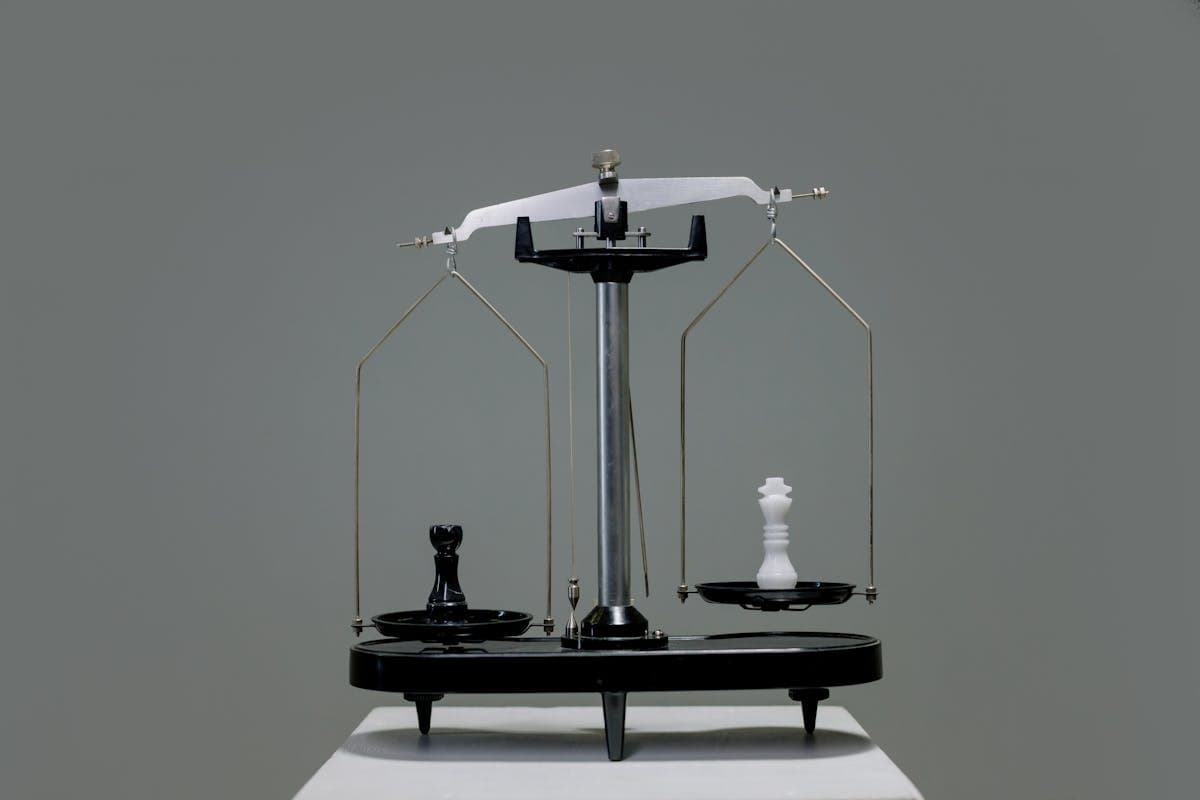

- • Originality and creative innovation: AI can remix and recombine existing ideas impressively well. But genuinely original ideas — the unexpected, the provocative, the human — it does not deliver. It is a skilled assistant, not a visionary.

- • Complex mathematics and logical reasoning: Despite improvements, AI still struggles with multi-step logic, advanced mathematics, and tasks requiring precise step-by-step reasoning. It sounds confident but often gets it wrong.

- • Long-term consistency: In longer conversations or projects, AI loses the thread. It forgets instructions from the beginning, contradicts itself, and produces output that deviates from what was agreed. This is the 'lost in the middle' problem in practice.

- • Assessments with consequences: Legal, medical, and financial assessments require accountability that AI cannot bear. It can assist, but never replace human judgment in decisions with real consequences.

The misconception that costs businesses the most

The most expensive mistake we see is companies treating AI as a black box: you ask a question and expect a perfect answer. When the answer is garbage, they conclude AI is overhyped.

But AI models have no knowledge of your business, no memory of earlier conversations, and no understanding of your context unless you provide it. Technically, everything the model sees at request time is the text sent in: your prompt plus whatever context you include. Nothing more.

This is why the same model can be fantastic in one system and useless in another. The difference is not the model — it is the context and data surrounding it. We dive deeper into this in our article on data readiness and context engineering.

When fluent language looks like understanding

Part of the problem is the interface. When a system responds in fluent, human-sounding language and displays a 'thinking' or 'reasoning' process, users intuitively start attributing understanding, intent, and judgment to it.

But what looks like reflection is often just more processing: the model generates additional intermediate text, each step still a statistical prediction, and produces a more persuasive explanation. That can improve the output, but it is not the same as deeper understanding of your business, your data, or the consequences of a mistake.

This is exactly why businesses can end up trusting the most articulate models too much. A confident tone and a polished reasoning trace can hide the fact that the answer still rests on uncertain assumptions. The conclusion should not be distrust of AI, but a design principle: the more human the system sounds, the more important it is to build workflows around verification, traceability, and human control.
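As a rough illustration of that design principle, the Python sketch below routes a model answer based on whether it can be traced to known sources and whether the decision is high-stakes. The class, the three routing labels, and the source identifiers are hypothetical names chosen for this example; a real workflow would define its own rules.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class ModelAnswer:
    """Hypothetical container for a model reply and the sources it cites."""
    text: str
    cited_sources: List[str] = field(default_factory=list)


def route_answer(answer: ModelAnswer, known_sources: Set[str], high_stakes: bool) -> str:
    """Return 'auto', 'human_review', or 'reject' for a model answer."""
    # Traceability: an answer without any citation cannot be checked automatically.
    if not answer.cited_sources:
        return "human_review"
    # Verification: a citation that is not in our own document store
    # may be invented, so the answer is not passed on.
    if any(source not in known_sources for source in answer.cited_sources):
        return "reject"
    # Human control: high-stakes decisions always get a human reviewer.
    if high_stakes:
        return "human_review"
    return "auto"


# Illustrative usage with made-up source identifiers.
known = {"faq-12", "policy-3"}
answer = ModelAnswer("Returns are accepted within 30 days.", ["policy-3"])
print(route_answer(answer, known, high_stakes=False))
```

Note that nothing in this gate depends on how fluent or confident the answer sounds; only on what can be verified.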

Context beats prompt — every time

The AI industry is moving from 'prompt engineering' to 'context engineering'. The difference is fundamental:

Prompt engineering is about formulating the perfect question. Context engineering is about ensuring AI has access to the right documents, the right history, the right business rules, and the right context — before it even sees your question.

A customer service system that only sees the user's question gives generic answers. Give it order history, customer segment, previous inquiries, and relevant FAQ articles, and it gives answers that feel magically precise. The model is the same — the context is different.
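The customer service example can be sketched in a few lines of Python. The `CustomerContext` fields mirror the items named above; the class, the function names, and the section headings in the assembled text are assumptions made for illustration, and fetching the data from real systems is out of scope here.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CustomerContext:
    """Hypothetical context record; field names are illustrative."""
    segment: str = ""
    order_history: List[str] = field(default_factory=list)
    previous_inquiries: List[str] = field(default_factory=list)
    faq_articles: List[str] = field(default_factory=list)


def _section(title: str, items: List[str]) -> List[str]:
    """Render one titled block, or nothing if there are no items."""
    if not items:
        return []
    return [f"## {title}", *[f"- {item}" for item in items], ""]


def build_model_input(question: str, ctx: Optional[CustomerContext] = None) -> str:
    """Combine context and question into the single text the model receives."""
    parts: List[str] = []
    if ctx is not None:
        if ctx.segment:
            parts += ["## Customer segment", ctx.segment, ""]
        parts += _section("Order history", ctx.order_history)
        parts += _section("Previous inquiries", ctx.previous_inquiries)
        parts += _section("Relevant FAQ articles", ctx.faq_articles)
    # The question comes last, after everything the model needs to answer it.
    parts += ["## Question", question]
    return "\n".join(parts)


# Same question, with and without context (sample data is made up).
question = "Where is my order?"
ctx = CustomerContext(
    segment="business customer",
    order_history=["Order 1001, shipped 3 May, carrier tracking pending"],
    faq_articles=["Delivery times: 2 to 5 working days"],
)
print(build_model_input(question))
print("---")
print(build_model_input(question, ctx))
```

Both calls would go to the same model. The only thing that differs is the text it receives, which is the whole point of context engineering.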

For businesses, the implication is clear: you should invest at least as much in your data infrastructure as in your AI model. The best prompt in the world cannot save a model that does not have access to the right data.

A practical decision framework: Use AI here — avoid it there

Based on our experience with businesses, we have developed a simple rule of thumb:

- Use AI when: The task involves processing, sorting, summarizing, or transforming existing information. Here AI is typically faster and often better than humans.

- Be cautious when: The task requires factually correct answers without verifiable sources. Here AI should assist, not decide, and always with human quality control.

- Avoid AI when: The task requires original creativity, complex ethical assessment, or responsible decisions with legal or financial consequences. Here AI is a sparring partner, not a decision-maker.
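For teams that want to apply the rule of thumb consistently, it can be written down as a small Python function. This is a sketch under our own assumptions: the `Task` fields, the three return values, the order in which the rules are checked (the strictest rule wins), and the cautious default for tasks the rule of thumb does not cover are all choices made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a task; all flags default to False."""
    transforms_existing_information: bool = False
    needs_facts_without_sources: bool = False
    needs_original_creativity: bool = False
    needs_complex_ethical_judgment: bool = False
    has_legal_or_financial_consequences: bool = False


def recommend(task: Task) -> str:
    """Return 'use', 'caution', or 'avoid' for a task."""
    # Strictest rule first: AI may be a sparring partner, a human decides.
    if (
        task.needs_original_creativity
        or task.needs_complex_ethical_judgment
        or task.has_legal_or_financial_consequences
    ):
        return "avoid"
    # AI assists, with human quality control.
    if task.needs_facts_without_sources:
        return "caution"
    # Processing, sorting, summarizing, transforming existing information.
    if task.transforms_existing_information:
        return "use"
    # Assumption: anything the rule of thumb does not cover is treated cautiously.
    return "caution"


summarize_meeting = Task(transforms_existing_information=True)
draft_contract_decision = Task(
    transforms_existing_information=True,
    has_legal_or_financial_consequences=True,
)
print(recommend(summarize_meeting))
print(recommend(draft_contract_decision))
```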

Conclusion: Know the technology's limits — and exploit its strengths

AI is not magic, and it is not hype. It is an extraordinarily powerful tool within specific domains — and surprisingly fragile outside them.

The companies that succeed with AI are those that understand this difference. They use AI for what it is good at, ensure the right context and data, and keep humans in the loop where it counts. That is exactly the approach we take at Vertex Solutions: AI with governance, control, and human anchoring.

- Match AI to the task type, not the other way around

- Invest in data and context, not just models and prompts

- Keep humans in the decision loop for tasks with consequences

- Test AI on your actual use cases, not on demos

- Understand the limitations and build systems that compensate for them