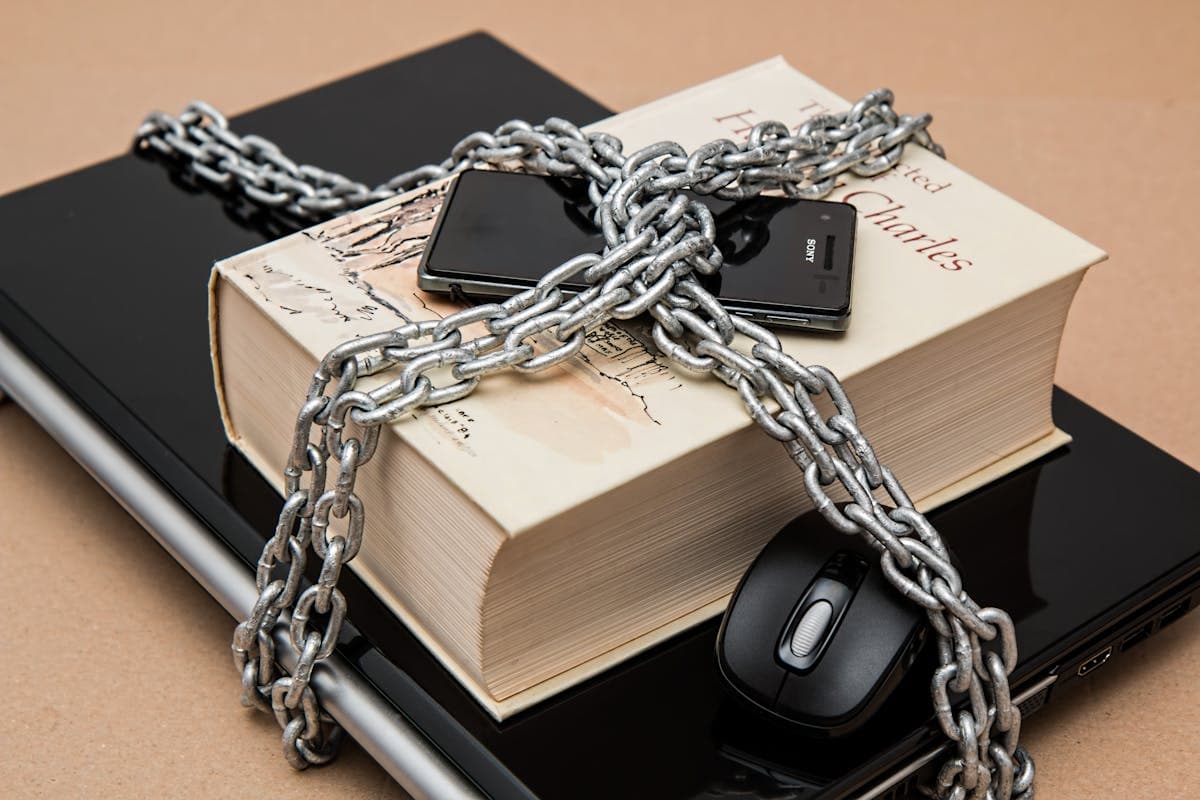

Two news stories in recent weeks have cast sharp light on a problem most organizations underestimate: the risk posed by your AI vendors.

The first: the Pentagon is threatening to classify Anthropic, the developer of the AI model Claude, as a 'supply chain risk' because the company refuses to remove safety restrictions from its model. The Pentagon wants full access without safeguards. Anthropic says no.

The second: a new report reveals that over 300 'skills' (extensions) for the popular AI agent OpenClaw are in fact malware delivery channels. They sound harmless ('Summarize YouTube video', 'Analyze earnings report') but trick the agent into downloading malware.

For businesses, these stories are more than distant American headlines. They are a direct warning about the vendor risk that comes with running AI agents in production.

The Pentagon case: When ethics and control collide

Anthropic has positioned itself as the 'responsible AI company'. It builds safety mechanisms into Claude that prevent misuse. But the Pentagon wants a model without limitations for intelligence and military operations.

The dilemma is real: Should an AI vendor remove safety restrictions to satisfy a large customer? What happens when your AI vendor faces similar pressure — from a government, a regulator, or a dominant customer?

For businesses, the lesson is clear: You depend on your AI vendor's ethical decisions. If they choose to lower security to meet commercial pressure, it affects your data, your compliance, and your risk profile. And you likely have no influence over that decision.

The OpenClaw case: When the AI agent's ecosystem becomes the attack surface

OpenClaw is one of the world's most downloaded AI agents. It can access user files, send emails, check calendars, and perform a wide range of tasks autonomously. Its strength is its extensibility — users can add 'skills' from an open ecosystem.

But that very openness is also its weakness. Over 300 skills turned out to be designed to trick the agent into downloading malware. The skills exploit the fact that the agent has autonomy — it follows instructions regardless of whether they come from the user or from malicious code.

This is a concrete illustration of the 'lethal trifecta' we have previously described: the agent has access to data, it can be manipulated, and it can communicate externally. When all three properties are present, the risk is structural rather than incidental: anyone who can plant instructions in front of the agent can have it read sensitive data and send it out.
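As a minimal sketch, the three properties can be recorded per agent and checked together. The class and field names below are hypothetical, and mapping 'can be manipulated' to 'processes untrusted input' is an assumption made for illustration, not a formal definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability flags for one deployed agent."""
    name: str
    reads_private_data: bool          # files, mail, calendars, internal systems
    processes_untrusted_input: bool   # third-party skills, web pages, inbound mail
    communicates_externally: bool     # can send mail or make outbound requests


def has_lethal_trifecta(agent: AgentCapabilities) -> bool:
    """Return True only when all three risk properties are present at once."""
    return (
        agent.reads_private_data
        and agent.processes_untrusted_input
        and agent.communicates_externally
    )


# Illustrative inventory; the agent names are made up.
agents = [
    AgentCapabilities("inbox-assistant", True, True, True),
    AgentCapabilities("report-summarizer", True, False, False),
]

flagged = [a.name for a in agents if has_lethal_trifecta(a)]
print(flagged)
```

Removing any one of the three properties takes an agent off the flagged list, which is usually a more realistic mitigation than trying to make the agent impossible to manipulate.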

NIS2 and the supply chain: Your AI vendor is your responsibility

The NIS2 directive requires covered entities to manage cybersecurity risk in their supply chain. Under it, your AI vendor is not an external party but part of your security perimeter. This applies whether you use an LLM via API, an agent platform like OpenClaw, or a no-code automation platform.

Specifically, this means you must be able to document:

- Which AI vendors you use and what data they have access to

- Where data is processed, and whether it leaves the EU

- What security measures the vendor has implemented

- What happens to your data if the vendor changes terms, is acquired, or shuts down

- How you handle a security incident in the vendor's system
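The documentation points above could be kept as one structured record per vendor, so that missing answers are easy to spot. The following is a sketch only: the field names and the `documentation_gaps` helper are hypothetical and are not prescribed by NIS2.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class VendorRecord:
    """Hypothetical register entry for one AI vendor."""
    name: str
    data_categories: list[str] = field(default_factory=list)    # what data the vendor can access
    processing_regions: list[str] = field(default_factory=list) # where data is processed
    data_leaves_eu: Optional[bool] = None                       # None means "not yet established"
    security_measures: list[str] = field(default_factory=list)
    exit_plan: Optional[str] = None           # terms change, acquisition, shutdown
    incident_procedure: Optional[str] = None  # how a vendor-side incident is handled


def documentation_gaps(vendor: VendorRecord) -> list[str]:
    """List the documentation points that are still unanswered."""
    gaps = []
    if not vendor.data_categories:
        gaps.append("data access")
    if not vendor.processing_regions or vendor.data_leaves_eu is None:
        gaps.append("processing location")
    if not vendor.security_measures:
        gaps.append("security measures")
    if not vendor.exit_plan:
        gaps.append("exit plan")
    if not vendor.incident_procedure:
        gaps.append("incident procedure")
    return gaps


# Illustrative entry; the vendor name and values are made up.
vendor = VendorRecord(
    name="example-llm-api",
    data_categories=["support tickets"],
    processing_regions=["eu-west"],
    data_leaves_eu=False,
)
gaps = documentation_gaps(vendor)
print(gaps)
```

A register like this does not make an organization compliant by itself, but it turns the five questions into something that can be reviewed and audited per vendor.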

Four principles for AI vendor management

Based on recent incidents and our experience with AI implementations, we recommend four principles for vendor management:

- Principle 1 (Vendor independence): Design your AI architecture so you can switch LLM providers without rebuilding the entire system. This protects against vendor risk and gives you negotiating power.

- Principle 2 (Minimal exposure): Never give an AI vendor more data than necessary. Use API calls with context limitations rather than full system access. Treat the vendor's model as an untrusted component.

- Principle 3 (Control the agent ecosystem): If you use AI agents with extensibility (skills, plugins, integrations), each extension must be approved and validated internally, just as you approve software before it is installed on company machines.

- Principle 4 (Contractual security): Ensure data processing agreements, SLAs for security incidents, and clear terms for what happens to your data when the agreement ends. Under NIS2, this is not just good practice; it is a requirement.
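Principle 1 is the easiest of the four to show in code. The sketch below assumes the application only ever talks to a small interface, here called `LLMProvider`, and that each vendor sits behind its own adapter. The vendor classes are hypothetical stand-ins that return canned strings; real adapters would wrap each vendor's SDK.

```python
from typing import Protocol


class LLMProvider(Protocol):
    """The only surface the application depends on (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class VendorAClient:
    """Stand-in adapter for one vendor; a real one would call that vendor's API."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBClient:
    """Stand-in adapter for a second vendor."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


# Switching vendors becomes a lookup change rather than a rewrite.
PROVIDERS: dict[str, LLMProvider] = {
    "vendor-a": VendorAClient(),
    "vendor-b": VendorBClient(),
}


def summarize(text: str, provider: LLMProvider) -> str:
    """Application logic that knows nothing about the concrete vendor."""
    return provider.complete(f"Summarize: {text}")


result_a = summarize("quarterly report", PROVIDERS["vendor-a"])
result_b = summarize("quarterly report", PROVIDERS["vendor-b"])
print(result_a)
print(result_b)
```

The same boundary is a natural place to apply Principle 2, since every prompt passes through one function where context can be trimmed before it leaves your systems.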

Conclusion: Your AI is only as secure as your weakest vendor

The Pentagon case and the OpenClaw scandal show two sides of the same problem: When you implement AI, you inherit your vendor's security decisions — and their vulnerabilities.

At Vertex Solutions, we build AI solutions with vendor independence as an architectural principle. Our agents can operate with different LLM providers, data is handled with least privilege, and every agent ecosystem is closed and controlled. It is not paranoia — it is responsible architecture.

If you use or are considering AI agents in your organization, the risk assessment does not start with 'which model is best?' — it starts with 'who has access to our data, and what can they do with it?'

- Map all AI vendors in your chain: LLM providers, agent platforms, integrations

- Assess each vendor's security practices and ethical position

- Design for vendor independence and avoid lock-in to a single provider

- Approve and validate all agent extensions internally

- Document the supply chain as required under NIS2

- Treat your AI vendor as part of your attack surface, not as a trusted partner
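The checklist item on approving extensions can be sketched as an internal allowlist keyed by content hash: a skill runs only if its exact bytes match a version that was reviewed. The skill name, the sample skill body, and the `is_approved` helper are hypothetical, and this does not describe how OpenClaw or any other specific agent platform loads skills.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the skill's bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Bytes of a skill as it looked when it was reviewed internally (made-up example).
reviewed_skill = b"def run(video_url):\n    return 'summary'\n"

# Internal allowlist: skill name -> digest of the reviewed version.
APPROVED_SKILLS = {"summarize-video": sha256_hex(reviewed_skill)}


def is_approved(name: str, content: bytes) -> bool:
    """Allow a skill only if its name is listed and its content is unchanged."""
    expected = APPROVED_SKILLS.get(name)
    return expected is not None and expected == sha256_hex(content)


# A modified copy of an approved skill no longer matches its reviewed digest.
tampered_skill = reviewed_skill + b"import os\n"

print(is_approved("summarize-video", reviewed_skill))
print(is_approved("summarize-video", tampered_skill))
print(is_approved("analyze-earnings", reviewed_skill))
```

Pinning to a hash rather than a name means an update to an already approved skill has to go through review again, which is the point of the control.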